Create EKS Self-Managed Node Group

This section will guide you through creating a self-managed node group.

Fast Forward

If you already have a node group for your cluster, proceed to the Verify section.

What You’ll Need

- A configured management environment.

- An existing EKS cluster.

- Access to the AWS console, if you are going to create a self-managed node group.

Procedure

Download the CloudFormation template for self-managed node groups below:

amazon-eks-nodegroup.yaml

```yaml
AWSTemplateFormatVersion: "2010-09-09"

Description: Amazon EKS - Node Group 4-674

Metadata:
  "AWS::CloudFormation::Interface":
    ParameterGroups:
      - Label:
          default: EKS Cluster
        Parameters:
          - ClusterName
          - ClusterControlPlaneSecurityGroup
      - Label:
          default: Worker Node Configuration
        Parameters:
          - NodeGroupName
          - NodeAutoScalingGroupMinSize
          - NodeAutoScalingGroupDesiredCapacity
          - NodeAutoScalingGroupMaxSize
          - NodeInstanceType
          - NodeImageIdSSMParam
          - NodeImageId
          - NodeVolumeSize
          - NodeExtraEBSVolumeSize
          - KeyName
          - BootstrapArguments
          - DisableIMDSv1
      - Label:
          default: Worker Network Configuration
        Parameters:
          - VpcId
          - Subnets

Parameters:
  BootstrapArguments:
    Type: String
    Default: ""
    Description: "Arguments to pass to the bootstrap script. See files/bootstrap.sh in https://github.com/awslabs/amazon-eks-ami"

  ClusterControlPlaneSecurityGroup:
    Type: "AWS::EC2::SecurityGroup::Id"
    Description: The security group of the cluster control plane.

  ClusterName:
    Type: String
    Description: The cluster name provided when the cluster was created. If it is incorrect, nodes will not be able to join the cluster.

  KeyName:
    Type: "AWS::EC2::KeyPair::KeyName"
    Description: The EC2 Key Pair to allow SSH access to the instances

  NodeAutoScalingGroupDesiredCapacity:
    Type: Number
    Default: 3
    Description: Desired capacity of Node Group ASG.

  NodeAutoScalingGroupMaxSize:
    Type: Number
    Default: 4
    Description: Maximum size of Node Group ASG. Set to at least 1 greater than NodeAutoScalingGroupDesiredCapacity.

  NodeAutoScalingGroupMinSize:
    Type: Number
    Default: 1
    Description: Minimum size of Node Group ASG.

  NodeGroupName:
    Type: String
    Description: Unique identifier for the Node Group.

  NodeImageId:
    Type: String
    Default: ""
    Description: (Optional) Specify your own custom image ID. This value overrides any AWS Systems Manager Parameter Store value specified above.

  NodeImageIdSSMParam:
    Type: "AWS::SSM::Parameter::Value<AWS::EC2::Image::Id>"
    Default: /aws/service/eks/optimized-ami/1.17/amazon-linux-2/recommended/image_id
    Description: AWS Systems Manager Parameter Store parameter of the AMI ID for the worker node instances. Change this value to match the version of Kubernetes you are using.

  DisableIMDSv1:
    Type: String
    Default: "true"
    AllowedValues:
      - "false"
      - "true"

  NodeInstanceType:
    Type: String
    Default: t3.medium
    AllowedValues:
      - a1.2xlarge
      - a1.4xlarge
      - a1.large
      - a1.medium
      - a1.metal
      - a1.xlarge
      - c1.medium
      - c1.xlarge
      - c3.2xlarge
      - c3.4xlarge
      - c3.8xlarge
      - c3.large
      - c3.xlarge
      - c4.2xlarge
      - c4.4xlarge
      - c4.8xlarge
      - c4.large
      - c4.xlarge
      - c5.12xlarge
      - c5.18xlarge
      - c5.24xlarge
      - c5.2xlarge
      - c5.4xlarge
      - c5.9xlarge
      - c5.large
      - c5.metal
      - c5.xlarge
      - c5a.12xlarge
      - c5a.16xlarge
      - c5a.24xlarge
      - c5a.2xlarge
      - c5a.4xlarge
      - c5a.8xlarge
      - c5a.large
      - c5a.metal
      - c5a.xlarge
      - c5ad.12xlarge
      - c5ad.16xlarge
      - c5ad.24xlarge
      - c5ad.2xlarge
      - c5ad.4xlarge
      - c5ad.8xlarge
      - c5ad.large
      - c5ad.metal
      - c5ad.xlarge
      - c5d.12xlarge
      - c5d.18xlarge
      - c5d.24xlarge
      - c5d.2xlarge
      - c5d.4xlarge
      - c5d.9xlarge
      - c5d.large
      - c5d.metal
      - c5d.xlarge
      - c5n.18xlarge
      - c5n.2xlarge
      - c5n.4xlarge
      - c5n.9xlarge
      - c5n.large
      - c5n.metal
      - c5n.xlarge
      - c6g.12xlarge
      - c6g.16xlarge
      - c6g.2xlarge
      - c6g.4xlarge
      - c6g.8xlarge
      - c6g.large
      - c6g.medium
      - c6g.metal
      - c6g.xlarge
      - c6gd.12xlarge
      - c6gd.16xlarge
      - c6gd.2xlarge
      - c6gd.4xlarge
      - c6gd.8xlarge
      - c6gd.large
      - c6gd.medium
      - c6gd.metal
      - c6gd.xlarge
      - cc2.8xlarge
      - cr1.8xlarge
      - d2.2xlarge
      - d2.4xlarge
      - d2.8xlarge
      - d2.xlarge
      - f1.16xlarge
      - f1.2xlarge
      - f1.4xlarge
      - g2.2xlarge
      - g2.8xlarge
      - g3.16xlarge
      - g3.4xlarge
      - g3.8xlarge
      - g3s.xlarge
      - g4dn.12xlarge
      - g4dn.16xlarge
      - g4dn.2xlarge
      - g4dn.4xlarge
      - g4dn.8xlarge
      - g4dn.metal
      - g4dn.xlarge
      - h1.16xlarge
      - h1.2xlarge
      - h1.4xlarge
      - h1.8xlarge
      - hs1.8xlarge
      - i2.2xlarge
      - i2.4xlarge
      - i2.8xlarge
      - i2.xlarge
      - i3.16xlarge
      - i3.2xlarge
      - i3.4xlarge
      - i3.8xlarge
      - i3.large
      - i3.metal
      - i3.xlarge
      - i3en.12xlarge
      - i3en.24xlarge
      - i3en.2xlarge
      - i3en.3xlarge
      - i3en.6xlarge
      - i3en.large
      - i3en.metal
      - i3en.xlarge
      - inf1.24xlarge
      - inf1.2xlarge
      - inf1.6xlarge
      - inf1.xlarge
      - m1.large
      - m1.medium
      - m1.small
      - m1.xlarge
      - m2.2xlarge
      - m2.4xlarge
      - m2.xlarge
      - m3.2xlarge
      - m3.large
      - m3.medium
      - m3.xlarge
      - m4.10xlarge
      - m4.16xlarge
      - m4.2xlarge
      - m4.4xlarge
      - m4.large
      - m4.xlarge
      - m5.12xlarge
      - m5.16xlarge
      - m5.24xlarge
      - m5.2xlarge
      - m5.4xlarge
      - m5.8xlarge
      - m5.large
      - m5.metal
      - m5.xlarge
      - m5a.12xlarge
      - m5a.16xlarge
      - m5a.24xlarge
      - m5a.2xlarge
      - m5a.4xlarge
      - m5a.8xlarge
      - m5a.large
      - m5a.xlarge
      - m5ad.12xlarge
      - m5ad.16xlarge
      - m5ad.24xlarge
      - m5ad.2xlarge
      - m5ad.4xlarge
      - m5ad.8xlarge
      - m5ad.large
      - m5ad.xlarge
      - m5d.12xlarge
      - m5d.16xlarge
      - m5d.24xlarge
      - m5d.2xlarge
      - m5d.4xlarge
      - m5d.8xlarge
      - m5d.large
      - m5d.metal
      - m5d.xlarge
      - m5dn.12xlarge
      - m5dn.16xlarge
      - m5dn.24xlarge
      - m5dn.2xlarge
      - m5dn.4xlarge
      - m5dn.8xlarge
      - m5dn.large
      - m5dn.xlarge
      - m5n.12xlarge
      - m5n.16xlarge
      - m5n.24xlarge
      - m5n.2xlarge
      - m5n.4xlarge
      - m5n.8xlarge
      - m5n.large
      - m5n.xlarge
      - m6g.12xlarge
      - m6g.16xlarge
      - m6g.2xlarge
      - m6g.4xlarge
      - m6g.8xlarge
      - m6g.large
      - m6g.medium
      - m6g.metal
      - m6g.xlarge
      - m6gd.12xlarge
      - m6gd.16xlarge
      - m6gd.2xlarge
      - m6gd.4xlarge
      - m6gd.8xlarge
      - m6gd.large
      - m6gd.medium
      - m6gd.metal
      - m6gd.xlarge
      - p2.16xlarge
      - p2.8xlarge
      - p2.xlarge
      - p3.16xlarge
      - p3.2xlarge
      - p3.8xlarge
      - p3dn.24xlarge
      - p4d.24xlarge
      - r3.2xlarge
      - r3.4xlarge
      - r3.8xlarge
      - r3.large
      - r3.xlarge
      - r4.16xlarge
      - r4.2xlarge
      - r4.4xlarge
      - r4.8xlarge
      - r4.large
      - r4.xlarge
      - r5.12xlarge
      - r5.16xlarge
      - r5.24xlarge
      - r5.2xlarge
      - r5.4xlarge
      - r5.8xlarge
      - r5.large
      - r5.metal
      - r5.xlarge
      - r5a.12xlarge
      - r5a.16xlarge
      - r5a.24xlarge
      - r5a.2xlarge
      - r5a.4xlarge
      - r5a.8xlarge
      - r5a.large
      - r5a.xlarge
      - r5ad.12xlarge
      - r5ad.16xlarge
      - r5ad.24xlarge
      - r5ad.2xlarge
      - r5ad.4xlarge
      - r5ad.8xlarge
      - r5ad.large
      - r5ad.xlarge
      - r5d.12xlarge
      - r5d.16xlarge
      - r5d.24xlarge
      - r5d.2xlarge
      - r5d.4xlarge
      - r5d.8xlarge
      - r5d.large
      - r5d.metal
      - r5d.xlarge
      - r5dn.12xlarge
      - r5dn.16xlarge
      - r5dn.24xlarge
      - r5dn.2xlarge
      - r5dn.4xlarge
      - r5dn.8xlarge
      - r5dn.large
      - r5dn.xlarge
      - r5n.12xlarge
      - r5n.16xlarge
      - r5n.24xlarge
      - r5n.2xlarge
      - r5n.4xlarge
      - r5n.8xlarge
      - r5n.large
      - r5n.xlarge
      - r6g.12xlarge
      - r6g.16xlarge
      - r6g.2xlarge
      - r6g.4xlarge
      - r6g.8xlarge
      - r6g.large
      - r6g.medium
      - r6g.metal
      - r6g.xlarge
      - r6gd.12xlarge
      - r6gd.16xlarge
      - r6gd.2xlarge
      - r6gd.4xlarge
      - r6gd.8xlarge
      - r6gd.large
      - r6gd.medium
      - r6gd.metal
      - r6gd.xlarge
      - t1.micro
      - t2.2xlarge
      - t2.large
      - t2.medium
      - t2.micro
      - t2.nano
      - t2.small
      - t2.xlarge
      - t3.2xlarge
      - t3.large
      - t3.medium
      - t3.micro
      - t3.nano
      - t3.small
      - t3.xlarge
      - t3a.2xlarge
      - t3a.large
      - t3a.medium
      - t3a.micro
      - t3a.nano
      - t3a.small
      - t3a.xlarge
      - t4g.2xlarge
      - t4g.large
      - t4g.medium
      - t4g.micro
      - t4g.nano
      - t4g.small
      - t4g.xlarge
      - u-12tb1.metal
      - u-18tb1.metal
      - u-24tb1.metal
      - u-6tb1.metal
      - u-9tb1.metal
      - x1.16xlarge
      - x1.32xlarge
      - x1e.16xlarge
      - x1e.2xlarge
      - x1e.32xlarge
      - x1e.4xlarge
      - x1e.8xlarge
      - x1e.xlarge
      - z1d.12xlarge
      - z1d.2xlarge
      - z1d.3xlarge
      - z1d.6xlarge
      - z1d.large
      - z1d.metal
      - z1d.xlarge
    ConstraintDescription: Must be a valid EC2 instance type
    Description: EC2 instance type for the node instances

  NodeVolumeSize:
    Type: Number
    Default: 20
    Description: Node volume size

  NodeExtraEBSVolumeSize:
    Type: Number
    Default: 0
    Description: Extra EBS volume size

  Subnets:
    Type: "List<AWS::EC2::Subnet::Id>"
    Description: The subnets where workers can be created.

  VpcId:
    Type: "AWS::EC2::VPC::Id"
    Description: The VPC of the worker instances

Mappings:
  PartitionMap:
    aws:
      EC2ServicePrincipal: "ec2.amazonaws.com"
    aws-us-gov:
      EC2ServicePrincipal: "ec2.amazonaws.com"
    aws-cn:
      EC2ServicePrincipal: "ec2.amazonaws.com.cn"
    aws-iso:
      EC2ServicePrincipal: "ec2.c2s.ic.gov"
    aws-iso-b:
      EC2ServicePrincipal: "ec2.sc2s.sgov.gov"

Conditions:
  HasNodeImageId: !Not
    - "Fn::Equals":
        - !Ref NodeImageId
        - ""

  IMDSv1Disabled:
    "Fn::Equals":
      - !Ref DisableIMDSv1
      - "true"

  HasNodeExtraDisk: !Not
    - "Fn::Equals":
        - !Ref NodeExtraEBSVolumeSize
        - 0


Resources:
  NodeInstanceRole:
    Type: "AWS::IAM::Role"
    Properties:
      AssumeRolePolicyDocument:
        Version: "2012-10-17"
        Statement:
          - Effect: Allow
            Principal:
              Service:
                - !FindInMap [PartitionMap, !Ref "AWS::Partition", EC2ServicePrincipal]
            Action:
              - "sts:AssumeRole"
      ManagedPolicyArns:
        - !Sub "arn:${AWS::Partition}:iam::aws:policy/AmazonEKSWorkerNodePolicy"
        - !Sub "arn:${AWS::Partition}:iam::aws:policy/AmazonEKS_CNI_Policy"
        - !Sub "arn:${AWS::Partition}:iam::aws:policy/AmazonEC2ContainerRegistryReadOnly"
      Path: /

  NodeInstanceProfile:
    Type: "AWS::IAM::InstanceProfile"
    Properties:
      Path: /
      Roles:
        - !Ref NodeInstanceRole

  NodeSecurityGroup:
    Type: "AWS::EC2::SecurityGroup"
    Properties:
      GroupDescription: Security group for all nodes in the cluster
      Tags:
        - Key: !Sub kubernetes.io/cluster/${ClusterName}
          Value: owned
      VpcId: !Ref VpcId

  NodeSecurityGroupIngress:
    Type: "AWS::EC2::SecurityGroupIngress"
    DependsOn: NodeSecurityGroup
    Properties:
      Description: Allow node to communicate with each other
      FromPort: 0
      GroupId: !Ref NodeSecurityGroup
      IpProtocol: "-1"
      SourceSecurityGroupId: !Ref NodeSecurityGroup
      ToPort: 65535

  ClusterControlPlaneSecurityGroupIngress:
    Type: "AWS::EC2::SecurityGroupIngress"
    DependsOn: NodeSecurityGroup
    Properties:
      Description: Allow pods to communicate with the cluster API Server
      FromPort: 443
      GroupId: !Ref ClusterControlPlaneSecurityGroup
      IpProtocol: tcp
      SourceSecurityGroupId: !Ref NodeSecurityGroup
      ToPort: 443

  ControlPlaneEgressToNodeSecurityGroup:
    Type: "AWS::EC2::SecurityGroupEgress"
    DependsOn: NodeSecurityGroup
    Properties:
      Description: Allow the cluster control plane to communicate with worker Kubelet and pods
      DestinationSecurityGroupId: !Ref NodeSecurityGroup
      FromPort: 1025
      GroupId: !Ref ClusterControlPlaneSecurityGroup
      IpProtocol: tcp
      ToPort: 65535

  ControlPlaneEgressToNodeSecurityGroupOn443:
    Type: "AWS::EC2::SecurityGroupEgress"
    DependsOn: NodeSecurityGroup
    Properties:
      Description: Allow the cluster control plane to communicate with pods running extension API servers on port 443
      DestinationSecurityGroupId: !Ref NodeSecurityGroup
      FromPort: 443
      GroupId: !Ref ClusterControlPlaneSecurityGroup
      IpProtocol: tcp
      ToPort: 443

  NodeSecurityGroupFromControlPlaneIngress:
    Type: "AWS::EC2::SecurityGroupIngress"
    DependsOn: NodeSecurityGroup
    Properties:
      Description: Allow worker Kubelets and pods to receive communication from the cluster control plane
      FromPort: 1025
      GroupId: !Ref NodeSecurityGroup
      IpProtocol: tcp
      SourceSecurityGroupId: !Ref ClusterControlPlaneSecurityGroup
      ToPort: 65535

  NodeSecurityGroupFromControlPlaneOn443Ingress:
    Type: "AWS::EC2::SecurityGroupIngress"
    DependsOn: NodeSecurityGroup
    Properties:
      Description: Allow pods running extension API servers on port 443 to receive communication from cluster control plane
      FromPort: 443
      GroupId: !Ref NodeSecurityGroup
      IpProtocol: tcp
      SourceSecurityGroupId: !Ref ClusterControlPlaneSecurityGroup
      ToPort: 443

  NodeLaunchTemplate:
    Type: "AWS::EC2::LaunchTemplate"
    Properties:
      LaunchTemplateData:
        BlockDeviceMappings: !If
          - HasNodeExtraDisk
          - - DeviceName: /dev/xvda
              Ebs:
                DeleteOnTermination: true
                VolumeSize: !Ref NodeVolumeSize
                VolumeType: gp2
            - DeviceName: /dev/sdf
              Ebs:
                DeleteOnTermination: true
                VolumeSize: !Ref NodeExtraEBSVolumeSize
                VolumeType: gp2
          - - DeviceName: /dev/xvda
              Ebs:
                DeleteOnTermination: true
                VolumeSize: !Ref NodeVolumeSize
                VolumeType: gp2

        IamInstanceProfile:
          Arn: !GetAtt NodeInstanceProfile.Arn
        ImageId: !If
          - HasNodeImageId
          - !Ref NodeImageId
          - !Ref NodeImageIdSSMParam
        InstanceType: !Ref NodeInstanceType
        KeyName: !Ref KeyName
        SecurityGroupIds:
          - !Ref NodeSecurityGroup
        UserData: !Base64
          "Fn::Sub": |
            #!/bin/bash
            set -o xtrace
            /etc/eks/bootstrap.sh ${ClusterName} ${BootstrapArguments}
            /opt/aws/bin/cfn-signal --exit-code $? \
              --stack ${AWS::StackName} \
              --resource NodeGroup \
              --region ${AWS::Region}
        MetadataOptions:
          HttpPutResponseHopLimit: 1
          HttpEndpoint: enabled
          HttpTokens: !If
            - IMDSv1Disabled
            - required
            - optional

  NodeGroup:
    Type: "AWS::AutoScaling::AutoScalingGroup"
    Properties:
      DesiredCapacity: !Ref NodeAutoScalingGroupDesiredCapacity
      LaunchTemplate:
        LaunchTemplateId: !Ref NodeLaunchTemplate
        Version: !GetAtt NodeLaunchTemplate.LatestVersionNumber
      MaxSize: !Ref NodeAutoScalingGroupMaxSize
      MinSize: !Ref NodeAutoScalingGroupMinSize
      Tags:
        - Key: Name
          PropagateAtLaunch: true
          Value: !Sub ${ClusterName}-${NodeGroupName}-Node
        - Key: !Sub kubernetes.io/cluster/${ClusterName}
          PropagateAtLaunch: true
          Value: owned
      VPCZoneIdentifier: !Ref Subnets
    UpdatePolicy:
      AutoScalingRollingUpdate:
        MaxBatchSize: 1
        MinInstancesInService: !Ref NodeAutoScalingGroupDesiredCapacity
        PauseTime: PT5M


Outputs:
  NodeInstanceRole:
    Description: The node instance role
    Value: !GetAtt NodeInstanceRole.Arn

  NodeSecurityGroup:
    Description: The security group for the node group
    Value: !Ref NodeSecurityGroup

  NodeAutoScalingGroup:
    Description: The autoscaling group
    Value: !Ref NodeGroup
```

Go to the AWS CloudFormation Console.

Click the Create stack drop-down menu and select With new resources (standard).

In Specify template, choose Upload a template file and upload the amazon-eks-nodegroup.yaml file you downloaded in the first step. Then click Next.

In Stack name, pick a name for the stack, for example, <EKS_CLUSTER>-workers, where <EKS_CLUSTER> is the name of your cluster.

In Parameters, for ClusterName, specify the name of the EKS cluster you created previously.

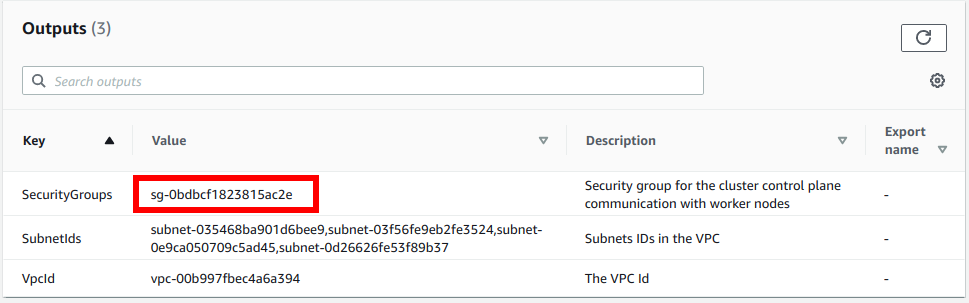

For ClusterControlPlaneSecurityGroup, select the security group created with your VPC CloudFormation stack. To find it, go to the AWS CloudFormation Console, select the CloudFormation stack of your VPC, go to Outputs, and note down the SecurityGroups value.

This is the security group that allows communication between your worker nodes and the Kubernetes control plane.
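You can also read the SecurityGroups output with the AWS CLI instead of the console. The following is a minimal sketch. The helper name cf_output is hypothetical, and <VPC_CF_STACK> stands for the name of your VPC CloudFormation stack.

```shell
#!/usr/bin/env bash
# Print the value of a single output of a CloudFormation stack.
# Requires the AWS CLI and credentials for the account that owns the stack.
# Usage (hypothetical helper; <VPC_CF_STACK> is your VPC stack name):
#   cf_output <VPC_CF_STACK> SecurityGroups
cf_output() {
  local stack="$1" key="$2"
  # JMESPath filter: keep only the output whose OutputKey equals $key.
  aws cloudformation describe-stacks \
    --stack-name "$stack" \
    --query "Stacks[0].Outputs[?OutputKey=='${key}'].OutputValue" \
    --output text
}
```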

For NodeGroupName, pick a name for the node group, for example, general-workers. The created instances will be named <EKS_CLUSTER>-<NODEGROUPNAME>-Node.

In NodeInstanceType, select your desired instance type. We recommend that you use an instance type that has instance store volumes (local NVMe storage) attached, such as m5d.4xlarge.

Set NodeImageIdSSMParam by choosing one of the following options based on the Kubernetes version of your cluster:

- Kubernetes 1.23: /aws/service/eks/optimized-ami/1.23/amazon-linux-2/amazon-eks-node-1.23-v20221112/image_id

- Kubernetes 1.22: /aws/service/eks/optimized-ami/1.22/amazon-linux-2/amazon-eks-node-1.22-v20221112/image_id

- Kubernetes 1.21: /aws/service/eks/optimized-ami/1.21/amazon-linux-2/amazon-eks-node-1.21-v20221112/image_id

Set a large enough NodeVolumeSize, for example, 200 (GB), since this volume will hold Docker images and the ephemeral storage of Pods.
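The NodeImageIdSSMParam options listed above differ only in the Kubernetes version, so the value can be derived from the cluster version. The following is a minimal sketch. It assumes you want exactly the v20221112 AMI releases listed above, and eks_ami_ssm_param is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Map a Kubernetes minor version to one of the NodeImageIdSSMParam values
# listed on this page. Any other version is rejected, so a typo cannot
# silently select an unintended AMI.
# Usage (hypothetical helper):
#   version="$(aws eks describe-cluster --name <EKS_CLUSTER> \
#       --query cluster.version --output text)"
#   eks_ami_ssm_param "$version"
#   # Optionally, see which AMI ID the parameter resolves to:
#   aws ssm get-parameter --name "$(eks_ami_ssm_param "$version")" \
#       --query Parameter.Value --output text
eks_ami_ssm_param() {
  local version="$1"
  case "$version" in
    1.21|1.22|1.23)
      printf '/aws/service/eks/optimized-ami/%s/amazon-linux-2/amazon-eks-node-%s-v20221112/image_id\n' \
        "$version" "$version"
      ;;
    *)
      echo "unsupported Kubernetes version: $version" >&2
      return 1
      ;;
  esac
}
```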

If the instance type you chose is EBS-only (that is, it does not have instance store volumes attached), set a large enough NodeExtraEBSVolumeSize, for example, 500 (GB), since this volume will hold your Rok storage.
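To check whether an instance type is EBS-only, you can query the InstanceStorageSupported field that DescribeInstanceTypes returns. The following is a minimal sketch, and instance_storage_kind is a hypothetical helper name. The exact spelling of booleans in the CLI's text output is an assumption, so the comparison ignores case.

```shell
#!/usr/bin/env bash
# Print "local" if the instance type has instance store volumes, or
# "ebs-only" if it does not (in which case, set NodeExtraEBSVolumeSize).
# Usage (hypothetical helper):
#   instance_storage_kind m5d.4xlarge
instance_storage_kind() {
  local itype="$1" supported
  supported="$(aws ec2 describe-instance-types \
    --instance-types "$itype" \
    --query 'InstanceTypes[0].InstanceStorageSupported' \
    --output text)" || return 1
  # Normalize to lower case before comparing (True/true, False/false).
  case "$(printf '%s' "$supported" | tr '[:upper:]' '[:lower:]')" in
    true) echo local ;;
    false) echo ebs-only ;;
    *) echo "unexpected value: $supported" >&2; return 1 ;;
  esac
}
```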

Under KeyName, specify an EC2 key pair to allow SSH access to the instances. This option is required by the CloudFormation template that we are using.

Ensure that DisableIMDSv1 is set to true so that worker nodes use IMDSv2 only.

For VpcId and Subnets, select the VPC and the subnets to launch the workers in from the drop-down lists. If you use EBS volumes, we highly recommend that the Auto Scaling group (ASG) spans a single Availability Zone, since an EBS volume can only be attached to instances in its own Availability Zone. Then click Next.
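The Parameters page also lists NodeAutoScalingGroupMinSize, NodeAutoScalingGroupDesiredCapacity and NodeAutoScalingGroupMaxSize, with template defaults of 1, 3 and 4. The template's rolling update policy keeps DesiredCapacity instances in service while it replaces them one at a time, and CloudFormation requires MinInstancesInService to be lower than the group's maximum size. MaxSize therefore needs at least one instance of headroom, as the parameter description says. The following is a hypothetical pre-flight check (check_asg_sizes is not part of the template).

```shell
#!/usr/bin/env bash
# Validate ASG sizes before submitting the stack:
#   MinSize <= DesiredCapacity, and MaxSize >= DesiredCapacity + 1.
# Usage (hypothetical helper): check_asg_sizes <min> <desired> <max>
check_asg_sizes() {
  local min="$1" desired="$2" max="$3"
  if (( min > desired )); then
    echo "MinSize ($min) must not exceed DesiredCapacity ($desired)" >&2
    return 1
  fi
  if (( max < desired + 1 )); then
    echo "MaxSize ($max) must be at least DesiredCapacity + 1 ($((desired + 1)))" >&2
    return 1
  fi
}
```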

In Configure stack options, add the following Tags that Cluster Autoscaler requires so that it can discover the instances of the ASG automatically. Then click Next.

| Key | Value |
|---|---|
| k8s.io/cluster-autoscaler/enabled | true |
| k8s.io/cluster-autoscaler/<EKS_CLUSTER> | owned |

Review your CloudFormation stack to make sure you have configured it as instructed above. Check the necessary boxes and click Create stack.
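You can also submit the same stack with a single AWS CLI call. The following is a minimal sketch and not part of the documented procedure. The helper name create_nodegroup_stack and the file params.json are hypothetical. params.json is assumed to hold the values you would otherwise enter on the Parameters page. CAPABILITY_IAM is the CLI counterpart of the IAM acknowledgement box, needed because the template creates an IAM role and an instance profile.

```shell
#!/usr/bin/env bash
# Create the node group stack from the CLI, with the Cluster Autoscaler tags.
# params.json (hypothetical) is a JSON list such as:
#   [
#     {"ParameterKey": "ClusterName", "ParameterValue": "arrikto-cluster"},
#     {"ParameterKey": "Subnets", "ParameterValue": "subnet-aaaa,subnet-bbbb"}
#   ]
# Usage: create_nodegroup_stack <CF_STACK_NAME> <EKS_CLUSTER> params.json
create_nodegroup_stack() {
  local stack="$1" cluster="$2" params_file="$3"
  # Stack tags are propagated by CloudFormation to the resources it creates,
  # which is how the ASG gets the tags that Cluster Autoscaler looks for.
  aws cloudformation create-stack \
    --stack-name "$stack" \
    --template-body file://amazon-eks-nodegroup.yaml \
    --parameters "file://${params_file}" \
    --capabilities CAPABILITY_IAM \
    --tags \
      "Key=k8s.io/cluster-autoscaler/enabled,Value=true" \
      "Key=k8s.io/cluster-autoscaler/${cluster},Value=owned"
}
```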

Note

CloudFormation will:

- Create an IAM role for the worker nodes to use.

- Create an Auto Scaling group with a new launch template.

- Create a security group for the worker nodes.

- Modify the given cluster security group to allow communication between the control plane and the worker nodes.
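To wait for CloudFormation from the command line instead of watching the console, you can use the AWS CLI's stack-create-complete waiter. The following is a minimal sketch, and wait_for_stack is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Block until the stack reaches CREATE_COMPLETE, then print its status.
# The waiter exits non-zero if the stack ends in a failed state or the
# waiter gives up, in which case this function returns 1.
# Usage (hypothetical helper): wait_for_stack <CF_STACK_NAME>
wait_for_stack() {
  local stack="$1"
  aws cloudformation wait stack-create-complete --stack-name "$stack" || return 1
  aws cloudformation describe-stacks --stack-name "$stack" \
    --query 'Stacks[0].StackStatus' --output text
}
```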

After the stack has finished creating, you need to allow the nodes to join the cluster. Go to your GitOps repository, inside your rok-tools management environment:

```
root@rok-tools:~# cd ~/ops/deployments
```

Specify the CloudFormation stack name you used for creating the node group:

```
root@rok-tools:~/ops/deployments# export CF_STACK_NAME=<CF_STACK_NAME>
```

Replace <CF_STACK_NAME> with your node group's CloudFormation stack name, for example:

```
root@rok-tools:~/ops/deployments# export CF_STACK_NAME=arrikto-cluster-workers
```

Obtain the IAM role that CloudFormation created for the nodes to run with:

```
root@rok-tools:~/ops/deployments# aws cloudformation describe-stacks \
>     --stack-name ${CF_STACK_NAME?} \
>     --query 'Stacks[].Outputs[?OutputKey==`NodeInstanceRole`].OutputValue' \
>     --output text
arn:aws:iam::123456789012:role/arrikto-cluster-workers-NodeInstanceRole-1UR1Z9YTC2TTK
```

Inspect the aws-auth ConfigMap to get existing entries, if any:

```
root@rok-tools:~/ops/deployments# kubectl get configmap aws-auth \
>     -n kube-system -o jsonpath={.data.mapRoles}
```

Edit rok/eks/aws-auth.yaml and add a new entry under mapRoles for the node instance role, keeping any existing entries you found in the previous step:

```yaml
data:
  mapRoles: |
    - groups:
      - system:bootstrappers
      - system:nodes
      rolearn: arn:aws:iam::123456789012:role/arrikto-cluster-workers-NodeInstanceRole-1UR1Z9YTC2TTK
      username: system:node:{{EC2PrivateDNSName}}
```

Commit your changes:

```
root@rok-tools:~/ops/deployments# git commit \
>     -am "Create EKS Self-managed Node Group: Allow $CF_STACK_NAME to join the cluster"
```

Apply the manifest:

```
root@rok-tools:~/ops/deployments# kubectl apply -f rok/eks/aws-auth.yaml
```
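Instead of typing the new mapRoles entry by hand, you can render it from the node instance role ARN. The following is a hypothetical helper, node_maprole_entry, that mirrors the entry layout of the rok/eks/aws-auth.yaml example. It prints only the new list item. You still need to place it under mapRoles, next to any existing entries, and commit and apply as described above.

```shell
#!/usr/bin/env bash
# Print a mapRoles list item that lets nodes running with the given IAM role
# join the cluster. {{EC2PrivateDNSName}} is kept literally; EKS expands it.
# Usage (hypothetical helper), indented for pasting under "mapRoles: |":
#   role_arn="$(aws cloudformation describe-stacks \
#       --stack-name "${CF_STACK_NAME?}" \
#       --query 'Stacks[].Outputs[?OutputKey==`NodeInstanceRole`].OutputValue' \
#       --output text)"
#   node_maprole_entry "$role_arn" | sed 's/^/    /'
node_maprole_entry() {
  local role_arn="$1"
  cat <<EOF
- groups:
  - system:bootstrappers
  - system:nodes
  rolearn: ${role_arn}
  username: system:node:{{EC2PrivateDNSName}}
EOF
}
```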

Verify

Go to your GitOps repository, inside your rok-tools management environment:

```
root@rok-tools:~# cd ~/ops/deployments
```

Restore the required context from previous sections:

```
root@rok-tools:~/ops/deployments# source <(cat deploy/env.eks-cluster)
root@rok-tools:~/ops/deployments# export EKS_CLUSTER
```

Verify that EC2 instances have been created:

```
root@rok-tools:~/ops/deployments# aws ec2 describe-instances \
>     --filters Name=tag-key,Values=kubernetes.io/cluster/${EKS_CLUSTER?}
{
    "Reservations": [
        {
            "Groups": [],
            "Instances": [
                {
                    "AmiLaunchIndex": 0,
                    "ImageId": "ami-012b81faa674369fc",
                    "InstanceId": "i-0a1795ed2c92c16d5",
                    "InstanceType": "m5.large",
                    "LaunchTime": "2021-07-27T08:39:41+00:00",
                    "Monitoring": {
                        "State": "disabled"
                    },
                    "Placement": {
                        "AvailabilityZone": "eu-central-1b",
                        "GroupName": "",
                        "Tenancy": "default"
                    },
                    ...
```

Verify that all EC2 instances use IMDSv2 only:

Retrieve the EC2 instance IDs of your cluster:

```
root@rok-tools:~/ops/deployments# aws ec2 describe-instances \
>     --filters Name=tag:kubernetes.io/cluster/${EKS_CLUSTER?},Values=owned \
>     --query "Reservations[*].Instances[*].InstanceId" --output text
i-075363bbf64a60e04 i-06a5ee72c6eed1bad
```

Repeat the steps below for each of the EC2 instance IDs you found in the previous step.

Specify the ID of the EC2 instance to operate on:

```
root@rok-tools:~/ops/deployments# export INSTANCE_ID=<INSTANCE_ID>
```

Replace <INSTANCE_ID> with one of the IDs you found in the previous step, for example:

```
root@rok-tools:~/ops/deployments# export INSTANCE_ID=i-075363bbf64a60e04
```

Verify that the HttpTokens metadata option is set to required:

```
root@rok-tools:~/ops/deployments# aws ec2 get-launch-template-data \
>     --instance-id ${INSTANCE_ID?} \
>     --query 'LaunchTemplateData.MetadataOptions.HttpTokens == `required`'
true
```

Verify that the HttpPutResponseHopLimit metadata option is set to 1:

```
root@rok-tools:~/ops/deployments# aws ec2 get-launch-template-data \
>     --instance-id ${INSTANCE_ID?} \
>     --query 'LaunchTemplateData.MetadataOptions.HttpPutResponseHopLimit == `1`'
true
```

Repeat the steps above, starting from specifying the instance ID, for each of the remaining instance IDs.
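Instead of repeating the two checks by hand for every instance, you can loop over the instance IDs. The following is a minimal sketch, and check_imdsv2 is a hypothetical helper name. It uses the same describe-instances filter and the same two get-launch-template-data fields as the steps above, but prints the values rather than a true/false comparison.

```shell
#!/usr/bin/env bash
# For every instance tagged kubernetes.io/cluster/<cluster>=owned, check that
# HttpTokens is "required" and HttpPutResponseHopLimit is 1.
# Prints "<id>: OK" per compliant instance; returns 1 if any instance fails.
# Usage (hypothetical helper): check_imdsv2 "${EKS_CLUSTER?}"
check_imdsv2() {
  local cluster="$1" id tokens hops failed=0
  for id in $(aws ec2 describe-instances \
      --filters "Name=tag:kubernetes.io/cluster/${cluster},Values=owned" \
      --query 'Reservations[*].Instances[*].InstanceId' --output text); do
    tokens="$(aws ec2 get-launch-template-data --instance-id "$id" \
      --query 'LaunchTemplateData.MetadataOptions.HttpTokens' --output text)"
    hops="$(aws ec2 get-launch-template-data --instance-id "$id" \
      --query 'LaunchTemplateData.MetadataOptions.HttpPutResponseHopLimit' --output text)"
    if [ "$tokens" = required ] && [ "$hops" = 1 ]; then
      echo "$id: OK"
    else
      echo "$id: HttpTokens=$tokens HttpPutResponseHopLimit=$hops" >&2
      failed=1
    fi
  done
  return "$failed"
}
```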

Verify that Kubernetes nodes have appeared:

```
root@rok-tools:~/ops/deployments# kubectl get nodes
NAME                                         STATUS   ROLES    AGE    VERSION
ip-172-31-0-86.us-west-2.compute.internal    Ready    <none>   8m2s   v1.23.13-eks-ba74326
ip-172-31-24-96.us-west-2.compute.internal   Ready    <none>   8m4s   v1.23.13-eks-ba74326
```
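Checking the STATUS column by eye does not scale well with many nodes. The following minimal sketch counts the nodes whose status is anything other than exactly Ready. The helper name count_not_ready is hypothetical. Alternatively, kubectl wait --for=condition=Ready nodes --all --timeout=600s blocks until every registered node is Ready.

```shell
#!/usr/bin/env bash
# Read `kubectl get nodes --no-headers` output on stdin and print how many
# nodes have a STATUS other than exactly "Ready" (0 means all nodes are Ready).
# Usage (hypothetical helper):
#   kubectl get nodes --no-headers | count_not_ready
count_not_ready() {
  awk '$2 != "Ready" { n++ } END { print n + 0 }'
}
```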

Summary

You have successfully created a self-managed node group.

What’s Next

The next step is to disable unsafe operations for your EKS cluster.